Shadow AI: The Risk Hiding in Your Own Company

Shadow AI is what happens when people in your company use AI tools — ChatGPT, Claude, Gemini, Copilot — without telling anyone. No policy, no approval, no oversight. Just open a tab and get on with the day.

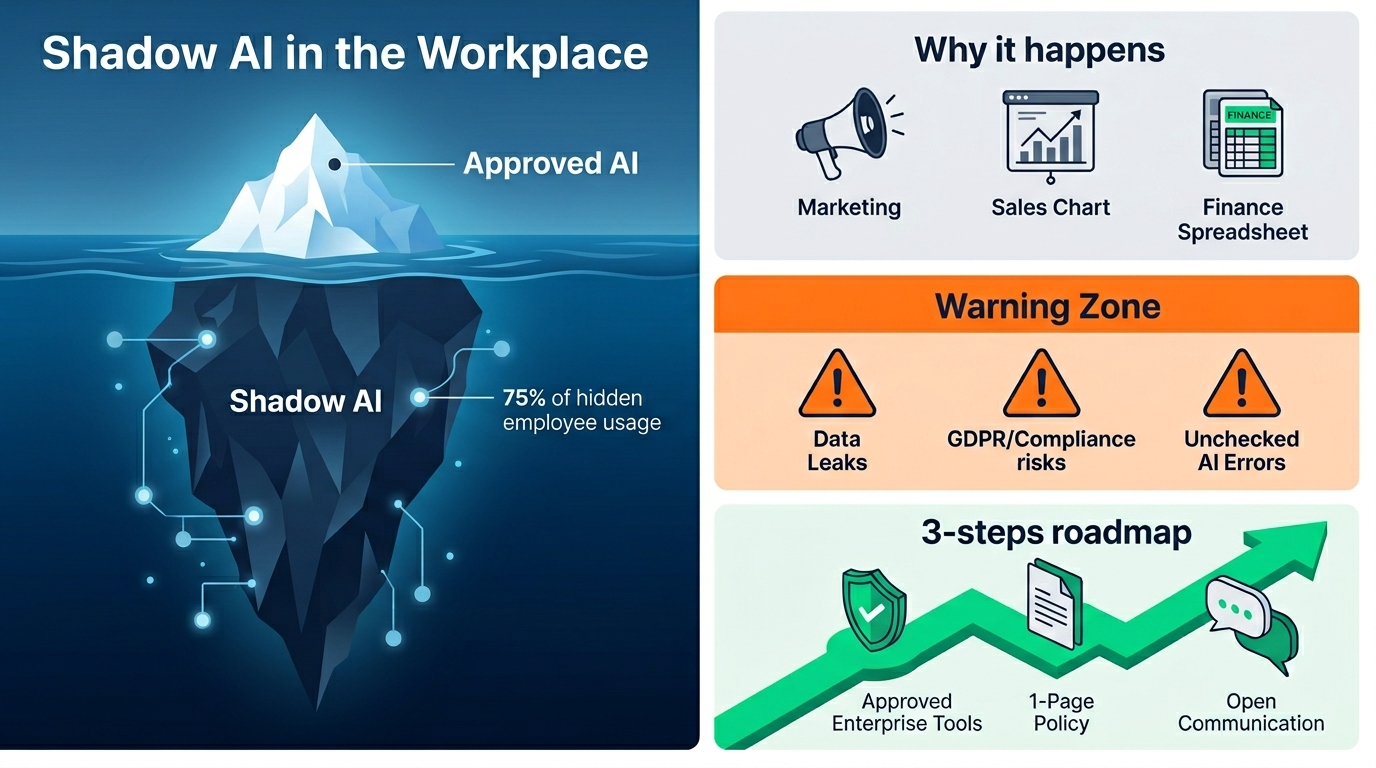

It's everywhere. Surveys keep landing in the same range: somewhere between half and three quarters of employees in a typical company are using AI tools their employer doesn't know about. In a 20-person business, that's 10 to 15 people doing unsupervised work with company information.

And most leaders haven't really clocked it yet.

Why it happens

It's not malicious. Most people aren't trying to break rules. They've found a tool that makes a frustrating part of their job easier, and they're using it. Marketing pastes a draft into ChatGPT to tighten it up. Sales asks Claude to summarize a long email thread before a meeting. Someone in finance gets help reformatting a spreadsheet.

Nobody asked. Nobody told them not to. So they kept going.

Why it matters

The problem isn't that people use AI. The problem is what goes into it.

Customer data. Internal financials. Employee information. Contract drafts. Source code. All of it pasted into tools your IT team has never reviewed, hosted by companies you don't have a data agreement with. Once it's in there, you don't really know where it ends up.

For a Norwegian SMB, that's a GDPR problem waiting to happen. For any business, it's a confidentiality problem.

There's also a quieter risk. Output from AI gets used in customer-facing work without anyone checking it. Bad facts. Made-up numbers. Tone that doesn't match the brand. The mistakes are small individually. They add up over a year.

What not to do

Don't ban it. Banning AI in 2026 is like banning Google in 2010. People will use it anyway, just better hidden, and you lose any chance of seeing what's happening.

Don't pretend it's fine. "We trust our people" isn't a policy. It's a hope.

What to actually do

Three things. Not complicated.

1. Pick a sanctioned tool. Microsoft 365 Copilot, ChatGPT Enterprise, Claude for Work: anything with a proper data agreement and admin controls. Roll it out to the team. Now they have a place to use AI that isn't a personal account.

2. Write one page of guidelines. What's allowed. What's not. What kind of data never goes into these tools. Keep it short enough that people will actually read it.

3. Talk about it. Ask the team what they're already using. You'll learn more in a 20-minute meeting than in any audit. People are usually relieved to have permission to be honest.

That's it. Shadow AI doesn't go away by ignoring it. It goes away when you give people a better, sanctioned option and treat them like adults about it.

Worried about what's happening in your own company? Get in touch.